How was your long weekend? I spent it avoiding Black Friday and recounting stories about my favorite designers and engineers. In their previous lives, they had degrees in math or music (or both!), which informed their approach to their current jobs. That inspired me to finally edit this post. For the content strategy and design community, I want to take a break from yapping about distributed coherence and spend a little integrated-studies time on it, which can help us define it better.

The jazz metaphor I threw down a few weeks back to explain distributed coherence in “Beyond Single Source of Truth” started as just a nice analogy. It turns out there’s actual math behind it: math that Claude Shannon worked out in 1948 while jazz musicians were already living it on bandstands. Still, it isn’t necessarily a clear metaphor if you’ve never been into jazz or math! So while I address the engineers and musicians in the crowd, I hope I can bring the rest of us along for the ride.

Short version: sending the same thing (signal) everywhere is suboptimal. Shannon proved it with equations. Miles Davis showed it with Kind of Blue. Content strategists have known this for years. Enterprises keep overruling them anyway.

Think in genres

Classical music works like traditional content publishing. The score is the source. Performers execute it faithfully. Deviation is failure.

Jazz works like distributed coherence. Musicians share a structure—key, changes, tempo, form—and interpret it for the moment. They respond to each other, to the room, to what’s actually happening.

One model assumes context doesn’t matter. The other assumes it’s everything.

Content strategy has always understood this. The pressure for “consistency” comes from somewhere else—legal wanting identical disclaimers everywhere, brand wanting the same hero message on every surface, IT wanting one template to rule them all. The enterprise wants standardization because standardization is easier to manage.

“Easy to manage” isn’t the same as effective.

Information theory for everybody

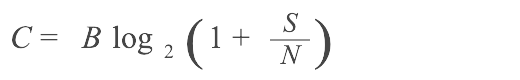

Now, something for the STEM kids. The Shannon–Hartley theorem formalizes this idea as channel capacity, C = B log₂(1 + S/N), where:

- C = capacity, or how much information you can actually transmit (in bits per second)

- B = bandwidth (in hertz)

- S/N = signal-to-noise ratio (a plain power ratio, not decibels)

The amount of information you can actually transmit depends on channel bandwidth AND signal-to-noise ratio. Not just what you send—what gets through.
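For the STEM kids who want to poke at the numbers, here’s a minimal Python sketch of the Shannon–Hartley formula, C = B log₂(1 + S/N). The function names are my own, and the voice-grade line in the last line is an illustrative example, not a measurement.

```python
import math


def shannon_capacity(bandwidth_hz: float, snr_linear: float) -> float:
    """Shannon-Hartley channel capacity in bits per second.

    bandwidth_hz: channel bandwidth B, in hertz
    snr_linear: signal-to-noise power ratio S/N, as a plain ratio (not dB)
    """
    if bandwidth_hz < 0 or snr_linear < 0:
        raise ValueError("bandwidth and S/N must be non-negative")
    return bandwidth_hz * math.log2(1 + snr_linear)


def db_to_linear(snr_db: float) -> float:
    """Convert an S/N quoted in decibels into a linear power ratio."""
    return 10 ** (snr_db / 10)


# Illustrative: a 3,000 Hz voice-grade line at 30 dB S/N (a ratio of 1,000)
print(round(shannon_capacity(3000, db_to_linear(30))))  # roughly 30,000 bits/s
```

S/N goes into the formula as a plain power ratio. Engineers often quote it in decibels, hence the conversion helper.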

What it means for content

1. Capacity is channel-specific.

Mobile isn’t just “smaller desktop.” It has different bandwidth (screen size, session length, attention availability) and different noise (notifications competing, environmental distractions, divided attention). The capacity is different. Content optimized for desktop and shipped to mobile isn’t “consistent”—it’s miscalibrated.

2. Noise isn’t just clutter—it’s anything that isn’t the signal.

Legal disclaimers, navigation chrome, promotional banners, “helpful” related content—all noise relative to the user’s actual task. Every element that isn’t the signal reduces capacity for the signal. That cookie banner isn’t free. It costs you transmission capacity for the thing you actually wanted to communicate.
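If it helps to see what that cookie banner costs, here’s a deliberately toy Python model, not a real measurement method: treat the article and each competing element as a hypothetical attention weight, sum the competitors as noise, and plug the ratio into C = B log₂(1 + S/N). The weights, the baseline noise, and the function name are all made up for illustration.

```python
import math


def page_capacity(signal: float, noise_elements: list[float], bandwidth: float = 1.0) -> float:
    """Toy model: every competing element adds to the noise the signal has to beat.

    signal and each noise weight are hypothetical attention weights, not
    measured quantities.
    """
    baseline_noise = 0.1  # hypothetical: distraction present even on a clean page
    noise = baseline_noise + sum(noise_elements)
    return bandwidth * math.log2(1 + signal / noise)


clean = page_capacity(1.0, [])
# hypothetical weights for a cookie banner, a promo banner, and related links
cluttered = page_capacity(1.0, [0.5, 0.3, 0.2])
print(f"clean page: {clean:.2f}, cluttered page: {cluttered:.2f}")
```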

3. Adding more signal hits diminishing returns.

The log function is brutal. Once your signal is well above the noise, doubling signal power doesn’t double capacity; it adds only about one bit per second for each hertz of bandwidth. Cramming more content onto the page doesn’t linearly increase information transfer. After a point, you’re just generating noise for yourself.
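Here’s a quick way to watch the log at work, as a sketch: compute capacity per hertz of bandwidth, log₂(1 + S/N), and check how much doubling the signal buys at different starting ratios. The S/N values are arbitrary sample points.

```python
import math


def spectral_efficiency(snr_linear: float) -> float:
    """Capacity per hertz of bandwidth, log2(1 + S/N), in bits/s/Hz."""
    return math.log2(1 + snr_linear)


# How much does doubling the signal power buy at different starting ratios?
for snr in (0.1, 1, 10, 100, 1000):
    before = spectral_efficiency(snr)
    after = spectral_efficiency(2 * snr)
    print(f"S/N {snr:>6}: {before:.2f} -> {after:.2f} bits/s/Hz (+{after - before:.2f})")
```

At a low ratio like 0.1, doubling the signal nearly doubles capacity. As the ratio climbs, each doubling adds closer and closer to one bit per second per hertz, and never more than that.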

4. You can’t overcome bad signal-to-noise by just shouting.

Making the headline bigger, bolding more text, adding exclamation points: you’re not increasing signal, you’re raising the noise floor. When everything is emphasized, nothing is. The S/N ratio stays the same or gets worse.
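A toy model shows why shouting doesn’t help. In this sketch (hypothetical weights, with “S/N” meaning the headline’s weight over everything else’s combined), scaling every element’s emphasis by the same factor leaves the ratio untouched, while emphasizing a non-signal element makes it worse.

```python
def snr(weights: dict[str, float], signal_key: str) -> float:
    """Toy S/N: the signal element's weight over the combined weight of everything else."""
    noise = sum(w for key, w in weights.items() if key != signal_key)
    return weights[signal_key] / noise


page = {"headline": 2.0, "promo": 1.0, "nav": 1.0}      # hypothetical weights
shouted = {key: w * 3 for key, w in page.items()}        # enlarge and bold everything
promo_shouted = {**page, "promo": page["promo"] * 3}     # emphasize a non-signal element

print(snr(page, "headline"), snr(shouted, "headline"), snr(promo_shouted, "headline"))  # 1.0 1.0 0.5
```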

5. Context is part of the signal.

Content without context forces users to do work to decode it. That work is cognitive noise. “Click here to learn more” has near-zero information content. “Download the pricing PDF” has high information content. Same bandwidth, radically different S/N.

The practical takeaway:

Your job isn’t to “publish more content.” It’s to maximize information transfer given the channel constraints.

That means:

- Reduce noise (strip what isn’t signal for this user in this moment)

- Match encoding to channel (adapt expression to context)

- Accept that identical content = suboptimal transfer everywhere

- Measure comprehension and task completion, not just delivery

Shannon proved you can’t escape the equation. You can only work with it. He proved more than seventy-five years ago what content strategists have been saying for decades. Enterprises keep building systems that ignore it because those systems are easier to build and govern. But remember what we said about easy.

What optimization actually looks like

Stripe gets this. Their API documentation detects what programming language you’re using and shows examples in that language. Same concept, different expression, zero cognitive overhead translating between contexts. The information is identical. The encoding matches the channel.

Duolingo gets this. Their push notifications are playful and, yes, quite guilt-trippy (“These reminders don’t seem to be working. We’ll stop sending them.”) because that channel tolerates personality. Their error messages during lessons are clear and direct because that context demands clarity. Same brand, different S/N ratios, different optimization.

Slack’s onboarding adapts to team size. A 5-person team doesn’t need the same setup flow as a 500-person organization. The goal is identical—get people using Slack. The encoding varies because the contexts are different.

These aren’t exceptions or special cases. They’re what optimization looks like when you’re allowed to do it.

What noise actually looks like

We talk a lot about optimization without naming the noise it’s meant to cut through. For example:

- Cookie consent banners that dump 2,000 words of legal text on someone who just wants to read an article. The legal department got their “consistency”—identical language everywhere. The user got noise. Information buried in cognitive load. Shannon would call this a transmission failure.

- Enterprise software that crams desktop interfaces into mobile apps. Every feature present, nothing adapted. Users pinching and zooming through workflows designed for mice. The content is “consistent.” It’s also unusable. The signal-to-noise ratio collapsed because no one optimized for the channel.

- Onboarding flows that explain every feature before letting you do anything. “But users need to know all the capabilities!” No—users need to accomplish their first task. Front-loading everything is noise. It’s optimizing for the org chart (every team gets their feature mentioned) instead of the user’s context (I just want to send one message).

- Error messages written in legalese. “An unexpected error has occurred. Please contact your system administrator.” Some teams even think they’re cutting jargon by writing “Something happened” with no information at all! That’s not signal. That’s the organization protecting itself at the user’s expense. The user needed: what happened, what it means for them, what to do next. They got: liability coverage.

- Help content written for power users, shown to everyone. “To configure the webhook payload, modify the JSON schema in your integration settings.” Shown to someone who just wanted to connect their calendar. The content exists. The user can’t decode it. Noise.

These aren’t content strategy or design failures. They’re what happens when content strategy and design get overruled by consistency requirements, legal review, template constraints, capacity issues, and “that’s how we’ve always done it.”

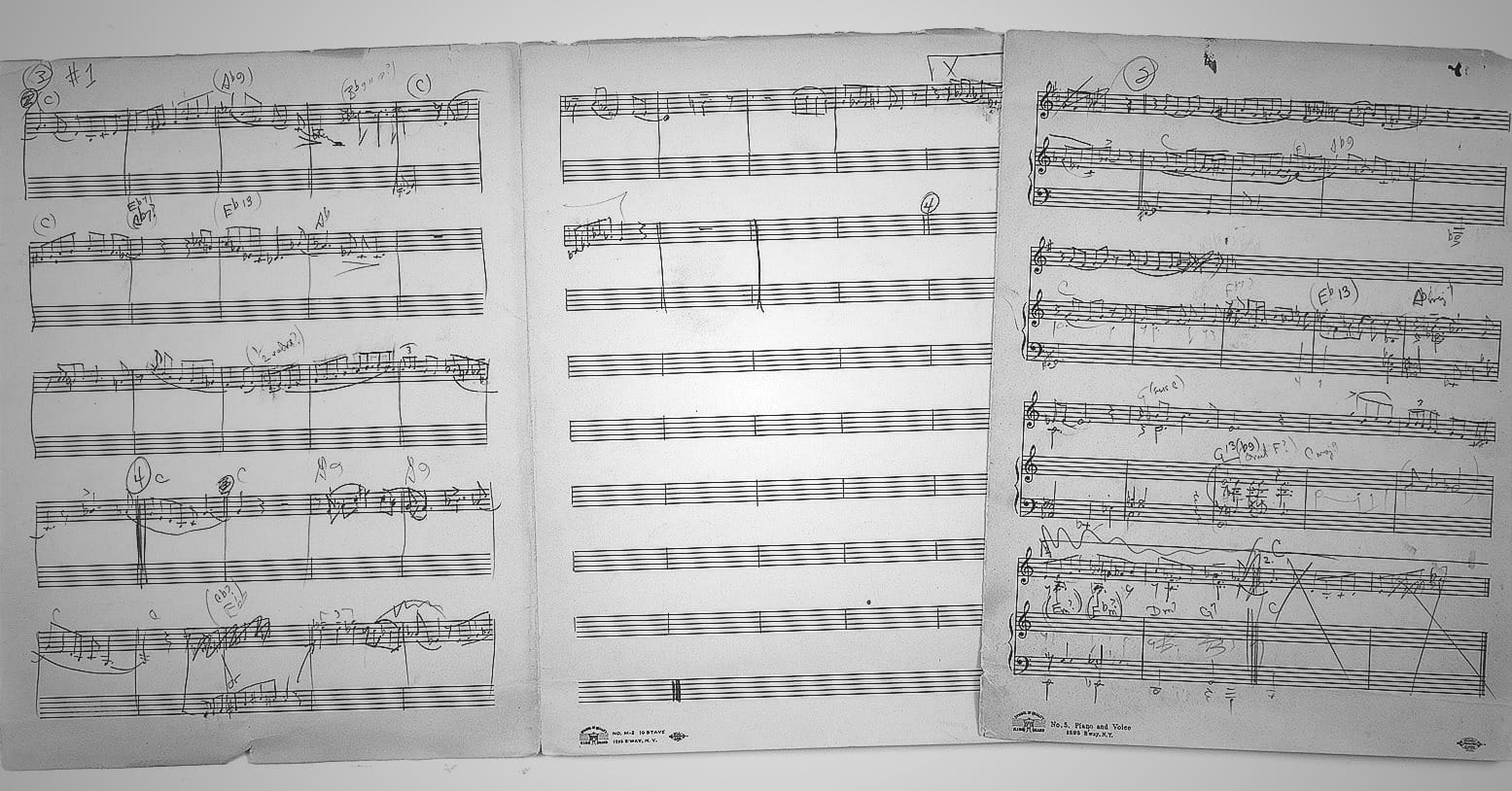

Kind of Blue?

Jazz musicians didn’t need the equation. They knew that what works in a small club doesn’t work in a concert hall. Same song, different expression. The math just explains why.

Miles Davis didn’t hand his musicians complex arrangements. He gave them modes—scales to work within. Simpler constraint, more freedom inside it.

That’s the move from templates to principles.

- Template: play these exact notes.

- Mode: these notes are available—make music.

Coltrane, Cannonball, and Bill Evans played completely different solos on that record. Yet the album is unmistakably coherent. Same constraints, different executions, unified result.

Information theory explains why this works: reducing constraint complexity while maintaining structural boundaries increases transmittable information. The mode is the principle. The solo is the contextual expression.
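One way to put rough numbers on “simpler constraint, more freedom inside it” is a sketch under a big simplifying assumption: every allowed note is equally likely, which real soloists obviously don’t do. Under that assumption, the most information a single choice can carry is log₂ of the number of options. A template leaves one option per note; a seven-note mode leaves seven.

```python
import math


def bits_per_choice(options: int) -> float:
    """Upper bound on information in one choice among equally likely options: log2(n)."""
    if options < 1:
        raise ValueError("need at least one option")
    return math.log2(options)


template = bits_per_choice(1)  # "play these exact notes": nothing left to choose
mode = bits_per_choice(7)      # a seven-note mode, such as the Dorian mode of "So What"
print(f"template: {template:.2f} bits/note, mode: {mode:.2f} bits/note")
```

The mode still rules out the other five pitches of the chromatic scale. That’s the structural boundary that keeps three very different solos sounding like one record.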

Coherence comes from the constraints, not from identical output.

Create a real book

The fake book standardized jazz—not by standardizing performances, but by codifying the minimum shared structure for coherence.

A lead sheet gives you:

- Melody (reference content—don’t change this)

- Chord changes (principles)

- Form (structure)

Two musicians who’ve never met can play together because they share this framework. They won’t play the same thing. They’ll play appropriately while staying coherent.

That’s a protocol. Minimum shared spec that enables collaboration without dictating output.

TCP/IP doesn’t tell you what to send. It tells you how sending works. A lead sheet doesn’t tell you what to play. It tells you how playing together works.

Content principles should do the same thing. But enterprises keep confusing the protocol with the message. They standardize the output instead of the constraints.
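To make “standardize the constraints, not the output” concrete, here’s a hypothetical Python sketch of a content lead sheet. `ContentSpec`, `conforms`, the part names, and the word limits are all invented for illustration, not taken from any CMS. The spec fixes the reference content and the form; each channel is free to play the rest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentSpec:
    """Hypothetical shared spec: the 'lead sheet' every channel plays from."""
    reference: str                    # melody: exact text that must not change
    required_parts: tuple[str, ...]   # form: parts every expression must include
    max_words: dict[str, int]         # per-channel length limits


def conforms(spec: ContentSpec, channel: str, parts: dict[str, str]) -> list[str]:
    """Return a list of problems; an empty list means the expression fits the spec."""
    problems = []
    for name in spec.required_parts:
        if name not in parts:
            problems.append(f"missing part: {name}")
    text = " ".join(parts.values())
    if spec.reference not in text:
        problems.append("reference content changed or missing")
    limit = spec.max_words.get(channel)
    if limit is not None and len(text.split()) > limit:
        problems.append(f"too long for {channel}")
    return problems


spec = ContentSpec(
    reference="Fees may apply.",
    required_parts=("action", "disclosure"),
    max_words={"push": 12, "web": 60},
)
web = {"action": "Compare plans side by side and pick the one that fits your team.",
       "disclosure": "Fees may apply."}
push = {"action": "Pick your plan today.", "disclosure": "Fees may apply."}
print(conforms(spec, "web", web), conforms(spec, "push", push))  # [] []
```

Two different expressions pass the same spec, and the web copy fails only when you try to ship it, unchanged, to push. A real system would check far more than substring matches and word counts.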

Coherence markers

Both Miles Davis and Thelonious Monk filled compositions with notes that sound “wrong” by classical standards. Dissonances. Weird intervals. Rhythms that feel off.

They’re not mistakes. They’re signal.

Monk’s musicians could tell intentional weirdness from actual mistakes because they understood the deeper coherence. They had the context to decode the signal.

Expected signals carry almost no information—you already knew they were coming. Predictable content is low-entropy. It confirms what users expected.

Unexpected signals carry more. Contextual variation is high-entropy—it carries meaning because it responds to the situation instead of replicating a template.
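Shannon’s measure for this is self-information: an event with probability p carries −log₂(p) bits. Here’s a minimal sketch; the probabilities are hypothetical stand-ins for how strongly a reader already expects a message.

```python
import math


def surprisal_bits(probability: float) -> float:
    """Shannon self-information of an event: -log2(p) bits."""
    if not 0 < probability <= 1:
        raise ValueError("probability must be in (0, 1]")
    return -math.log2(probability)


# Hypothetical odds that the reader already expects each message
print(f"{surprisal_bits(0.99):.3f}")   # boilerplate they saw coming: 0.014
print(f"{surprisal_bits(1 / 8):.3f}")  # a message that answers this situation: 3.000
```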

- In rigid systems, unexpected = error.

- In flexible systems, unexpected = meaning.

This is why you need coherence markers. Without them, you can’t tell adaptation from drift. With them, teams can use the full expressive range—including variations that would look like “errors” to someone expecting identical content everywhere.

The enterprise sees Monk’s wrong notes and files a bug report.

Redundancy

Shannon proved redundancy enables error correction. Repeat the message, or encode it with extra information, and the receiver can recover from the noise.
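The simplest version is a repetition code: send each bit three times and let the receiver take a majority vote. Here’s a minimal Python sketch over a simulated noisy channel that flips each bit with a chosen probability. It’s the textbook toy, not the kind of code Shannon’s theorem is about; his result is that far more efficient codes exist.

```python
import random


def encode(bits: list[int], n: int = 3) -> list[int]:
    """Repetition code: transmit every bit n times (n should be odd)."""
    return [b for b in bits for _ in range(n)]


def decode(received: list[int], n: int = 3) -> list[int]:
    """Majority vote over each group of n received copies."""
    return [int(sum(received[i:i + n]) > n // 2) for i in range(0, len(received), n)]


def noisy_channel(bits: list[int], flip_prob: float, rng: random.Random) -> list[int]:
    """Binary symmetric channel: flip each bit independently with probability flip_prob."""
    return [b ^ (rng.random() < flip_prob) for b in bits]


rng = random.Random(0)  # fixed seed so the run is repeatable
message = [rng.randint(0, 1) for _ in range(10_000)]

raw = noisy_channel(message, 0.1, rng)
coded = decode(noisy_channel(encode(message), 0.1, rng))

raw_errors = sum(a != b for a, b in zip(message, raw))
coded_errors = sum(a != b for a, b in zip(message, coded))
print(f"uncoded errors: {raw_errors}, repetition-coded errors: {coded_errors}")
```

With 10% of bits flipped, expect about 10% of the uncoded message to arrive wrong versus about 3% of the coded one. The catch is visible in `encode`: the coded message is three times longer. Redundancy isn’t free. It spends bandwidth to buy recoverability.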

Jazz has built-in redundancy. The changes repeat. The form cycles. The rhythm section holds the foundation. That’s not waste—it’s the safety net that makes improvisation possible.

Soloist’s phrase doesn’t land? Structure continues. Next phrase recovers. Redundancy creates room for risk.

Enterprise publishing has no redundancy. If the centralized content doesn’t fit the context, you get garbage and call it consistency.

Distributed coherence has principled redundancy. Multiple expressions of the same intent, each adapted, each recoverable. The principles persist even when individual expressions miss.

Feedback

Systems that adapt based on feedback outperform systems that don’t. Norbert Wiener’s cybernetics showed this at work in your nervous system and in thermostats.
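Here’s the thermostat version as a toy Python simulation. All the numbers (leak rate, gain, temperatures) are made up for illustration. Both heaters are tuned for a 10-degree day; only one of them measures the room.

```python
SETPOINT = 20.0  # target room temperature (illustrative)


def simulate(controller, outside_temps, start=20.0, leak=0.1):
    """Step a room's temperature: heater output in, heat leaking out toward outside."""
    temp = start
    for outside in outside_temps:
        temp += controller(temp) - leak * (temp - outside)
    return temp


def open_loop(temp):
    """Fixed output tuned for a 10-degree day; it never reads the room temperature."""
    return 1.0  # exactly offsets the leak, 0.1 * (20 - 10), on that day


def feedback(temp, gain=0.8):
    """Same baseline output plus a correction proportional to the measured error."""
    return max(0.0, 1.0 + gain * (SETPOINT - temp))


cold_snap = [0.0] * 50  # the outside drops to 0 degrees and stays there
print(f"open loop: {simulate(open_loop, cold_snap):.1f}")
print(f"feedback:  {simulate(feedback, cold_snap):.1f}")
```

When the weather changes, the open-loop heater keeps doing what it was told and the room drifts toward 10 degrees. The feedback heater settles near 18.9. It doesn’t hit 20 exactly, because purely proportional control leaves a steady-state offset (real controllers usually add an integral term to close that gap), but even an imperfect loop beats no loop.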

This is why we need to approach content work as a living practice. Content risks being treated like an inanimate object, or as something dumber than a thermostat! Most of the migration and redesign projects I’ve seen run into trouble had no true feedback loop. Sure, the mechanics were there, just not the incentives. Source publishes. Channels receive. Performance is measured. Research is rarely consulted to follow up. Adaptation happens outside the system and gets labeled “exceptions” and “technical debt.”

When content strategists say “this isn’t working on mobile,” they’re providing feedback. When the response is “that’s the approved content,” the feedback loop is broken.

Jazz is feedback. Musicians listen, respond, adjust. The drummer hears the soloist building and responds. The bassist hears the harmonic direction and supports it. Music emerges from continuous mutual adaptation.

Systems that don’t learn can’t improve. Monolithic content governance often doesn’t learn. It enforces.

Distributed coherence builds in feedback earlier, with fewer meetings. Teams adapt, learn what works, refine patterns. A variation rationale log is literally a feedback mechanism: it captures why adaptations happen so decisions get smarter over time.
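Here’s a hypothetical sketch of what a variation rationale log could look like in code. The field names, sample entries, and recurrence threshold are my assumptions, not a prescribed format. The idea: a reason that keeps recurring on one channel is a candidate for a shared principle.

```python
from collections import Counter
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Variation:
    """One entry in a hypothetical variation rationale log."""
    content_id: str
    channel: str
    change: str                      # what was adapted
    reason: str                      # why it was adapted
    logged_on: date = field(default_factory=date.today)
    outcome: Optional[str] = None    # filled in later, once results come back


def recurring_reasons(log: list[Variation], min_count: int = 2) -> list[tuple[str, str]]:
    """(channel, reason) pairs that keep recurring: candidates for a shared principle."""
    counts = Counter((v.channel, v.reason) for v in log)
    return [pair for pair, n in counts.items() if n >= min_count]


log = [
    Variation("pricing-faq", "mobile", "cut to the two most-asked questions", "short sessions"),
    Variation("onboarding", "mobile", "deferred the feature tour", "short sessions"),
    Variation("pricing-faq", "email", "added a plan comparison table", "readers asked for it"),
]
print(recurring_reasons(log))  # [('mobile', 'short sessions')]
```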

What jazz knows

Jazz musicians aren’t giving up on consistency. They’re achieving higher-order consistency. Notes change. Coherence doesn’t. Solos differ. Intent unifies.

Information theory supports this. Perfect reproduction only wins when conditions are identical and known. In the real world, with different users, channels, contexts, and moments, adaptation within constraints beats rigid replication.

Content strategists have always known this. The discipline isn’t behind. It’s constrained—by governance that prizes uniformity over effectiveness, by legal requirements that demand identical language regardless of context, by technical systems built for single-source distribution.

The obstacle isn’t knowledge. It’s permission.

You can’t play sheet music into a void, note for note, and hope it sounds good wherever it lands.

So yes, distributed coherence plays jazz with the real world.

This is a companion to the Distributed Coherence Toolkit.